You get to hear about algorithms daily. But have you wondered what exactly they are and what their purpose is? In layman’s terms, algorithms are step-by-step instructions when followed can help achieve the desired outcome. This is to say, that you can solve your problems using algorithms. At first, “algorithm” may seem a relatively new term, as it was popularized when the computer was introduced. In reality, it has a history dating to the times of Ancient Greece, where the first algorithm was captured.

Several complex algorithms have been developed each day since technological advancement. In this analysis, we will look at the advantages and disadvantages of one such algorithm, quick sort.

As the name suggests, this algorithm is used for sorting. It uses the approach of divide and conquer to solve the problem at hand. Using this algorithm, you can learn to analyze the worst, average, and best-case scenarios. Now let’s look at its pros and cons to understand what quick sort is all about.

Comparison Table of the Advantages and Disadvantages of Quick Sort

| Advantages | Disadvantages |

|---|---|

| Fast and efficient | Unstable |

| Cache-friendly | Worst-case time complexity (O(n^2)) |

| Space complexity (in-place sorting) | Dependent on pivot selection |

| Predictable average-case performance | Limited suitability for small datasets |

| Adapts well to many data types | Less suitable for linked lists |

| Reduced auxiliary space requirements | Recursive implementation can lead to stack overflow |

| Widely used in practice | Doesn’t guarantee stable sorting |

| Efficient for large datasets | Requires careful pivot selection for optimal performance |

| No additional data structures required | Performance degradation with already sorted data |

| No additional memory overhead | Suboptimal performance with equal elements |

| Minimizes comparisons | Difficult to implement for non-comparable data types |

| Supports parallel processing | Complexity in the implementation compared to simpler algorithms |

| Good general-purpose sorting algorithm | Potential security vulnerabilities with maliciously crafted input data |

| Time complexity O(n log n) on average | Poor performance with highly repetitive data patterns |

| Can be optimized with different pivot selection strategies | Not as intuitive as some other sorting algorithms |

| Degrades gracefully with nearly sorted data | Not suitable for external sorting of large files |

| Efficient with randomized input data | Requires additional memory for the call stack in recursive implementations |

| Good trade-off between average-case and worst-case performance | Cache performance is sensitive to the choice of pivot |

| Leverages divide-and-conquer strategy |

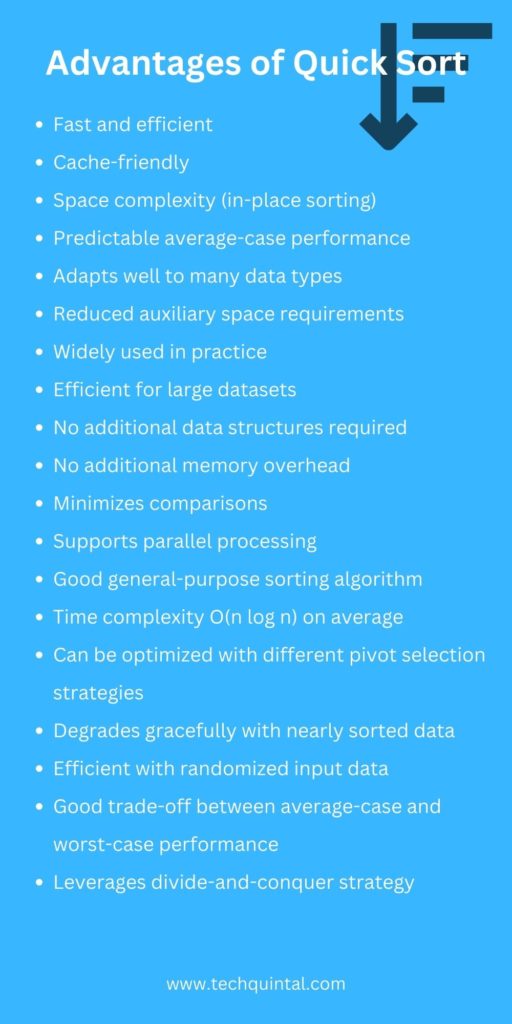

Advantages of quick sort

The quick sort algorithm allows you to solve your problems speedily. This makes it an efficient sorting algorithm. The following are the advantages of quick sort in detail.

1. Quick Sort is Fast and efficient

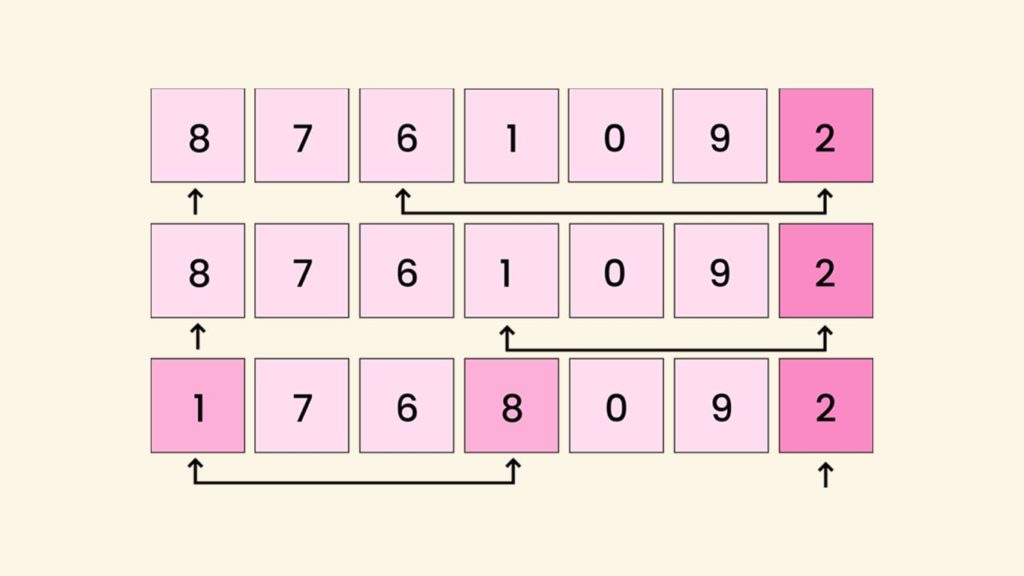

Quick sort is the most favored users choice to perform sorting functions quickly and efficiently. It allows users to achieve the same result much quicker than other sorting algorithms. Suppose you have a list of items you need to classify. Using this algorithm, you can select any value from the list, which will act as a pivot. It will divide the list into two categories.

Similarly, all categories will be further divided using pivots. You will then be left with small sections that cannot be split. You can now sort these sections and get an ordered list. This may sound simple at first, as the algorithm makes an element a pivot to partition the array. Eventually, the algorithm is applied to each partition recursively to sort the array. It has proved to be especially useful when sorting a huge list of items.

2. Cache friendly

The most talked-about feature of quicksort is its in-place sorting. This means, at the time of sorting, the algorithm doesn’t need additional storage space. Thus, the sorted list occupies the same storage as the unsorted list, and the sorting takes place in the given area. Compare this with other sorting algorithms, where the auxiliary array is required.

In that case, quick sort has the upper hand over other algorithms in the same category. Also, the quick sort algorithm is faster in an environment like virtual memory, as it manifests good cache locality.

3. Time complexity

Time complexity is the time taken by the algorithm to run until its completion. Compared to other sorting algorithms, it can be said that the time complexity of quick sorting is the best. To understand its time complexity, let’s look at best-case, average-case, and worst-case classifications.

A. Best-case time complexity

The case of quick sort is deemed the best case when the mean element is selected as the pivot. We know the pivot divides the array, and when a mean element is chosen as the pivot, the array is separated into equal subarrays. The height of the tree is minimum and in this case, the number of partitioning levels is log2 n. Therefore, the quick sort’s best-case time complexity is O(n log n).

B. Average-case time complexity

The case of quick sort is considered to be the average case when we don’t get evenly balanced partitions, and its average-case time complexity is O(n logn). You may already guessed the next scenario.

C. Worst-case time complexity

The case of quick sort is regarded to be the worst-case when the element selected as the pivot is either the smallest or largest element in the list. This usually happens when the list is almost sorted. When the chosen pivot is the largest element, the array is divided into n-1.

The partitioning won’t be equal, so there will be a significant difference in tree height. The partitioning levels is n and the effort is n, n-1, n-2, ... and so on. Ergo, the quick sort’s worst-case time complexity is O(n2).

4. Space complexity

Quick sort has a space complexity of O(logn), making it a suitable algorithm when you have restrictions in space availability. You might have figured out that space complexity is the memory space required by the algorithm to solve problems. This is to say that the space complexity of quick sort will be in the order of N as no container is created. Instead, the given array is used.

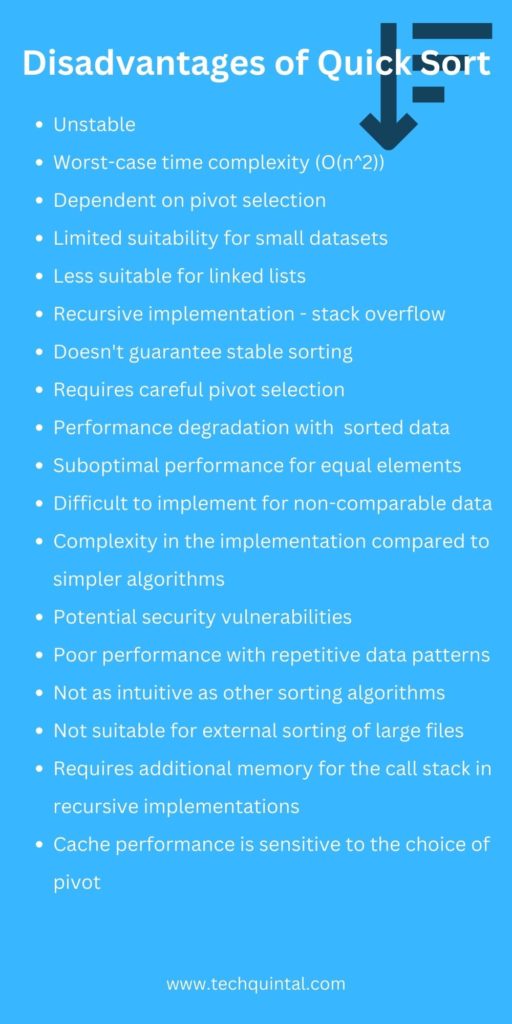

Disadvantages of quick sort

Each algorithm has benefits and drawbacks, and so does quicksort. The following are two downsides of the quick sort algorithm.

1. Quick Sort is Unstable

Quick sort is undoubtedly a fast and efficient sorting algorithm, but when it comes to stability, you might want to reconsider your options. This sorting algorithm is regarded as unstable as it does not retain the original order of the key-value pair. Whereas in stable sorting algorithms, even though the elements are sorted, the original order of the key-value pair is memorized.

In other words, quick sort won’t preserve the order of elements while reordering. Therefore, there is a possibility that the two same elements in the unsorted list can be reversed when displaying the sorted list.

2. Worst-case time complexity

In the above section, we saw the worst-case time complexity in detail. This is actually a drawback of quick sort. It usually occurs when the element selected as the pivot is the largest or smallest element, or when all the elements are the same. These worst cases drastically affect the performance of the quick sort.

Selecting a nearly sorted array can also result in StackOverflowException, where the recursion will have to go deep as the array is large. There are ways to tackle this situation, and one such way is shuffling. Shuffling introduces randomness in elements, which in turn helps the quick sort algorithm perform better.

Benefits of Quick Sort Over Other Sorting Algorithms

The benefits of Quick Sort over other sorting algorithms include its efficiency, as it is one of the fastest sorting algorithms for large datasets due to its average time complexity of O(n log n). It performs particularly well on randomized data and doesn’t require additional space, making it a space-efficient in-place sorting algorithm.

Moreover, Quick Sort’s divide-and-conquer approach can be parallelized for multiprocessor systems, further enhancing its performance.